Hate speech has been defined as “speech which attacks a person or group on the basis of attributes such as race, religion, ethnic origin, sexual orientation, disability, or gender.” And yes, as strange as it sounds, hate speech by that definition is still protected under the First Amendment, confirmed only last year by the Supreme Court. The exception only comes if the speech is direct, personal, and threatening or ‘violently provocative’ — then, it loses its free speech protection.

As you can see, that leaves a lot of room for the general spewing of hate.

The good news, of course, is that businesses can impose stricter guidelines. Hate speech is grounds for immediate dismissal at many companies, even if it won’t get you arrested. In theory, most social media platforms also prohibit hate speech of any kind. But as anyone who has dared to read the comment section on any article will tell you, those policies don’t exactly hold up. Recently, companies like Facebook, Twitter, and YouTube have claimed that they’re cracking down on hate speech in order to keep up with EU regulations. Germany has even passed legislation on it, and the EU has threatened to do the same if these companies don’t improve. So they’re trying to be better. In theory.

Unfortunately, there are still some serious problems.

It would be one thing if they just couldn’t keep up with the demand. If there were just too many reports of hate speech, and they didn’t have the manpower to attend to all of them. Granted, that’s part of the issue, sure (they are tiny companies, after all, with such limited resources!). But what about the reports they actually do answer — by promptly declaring the hate speech as ‘A-Okay’?

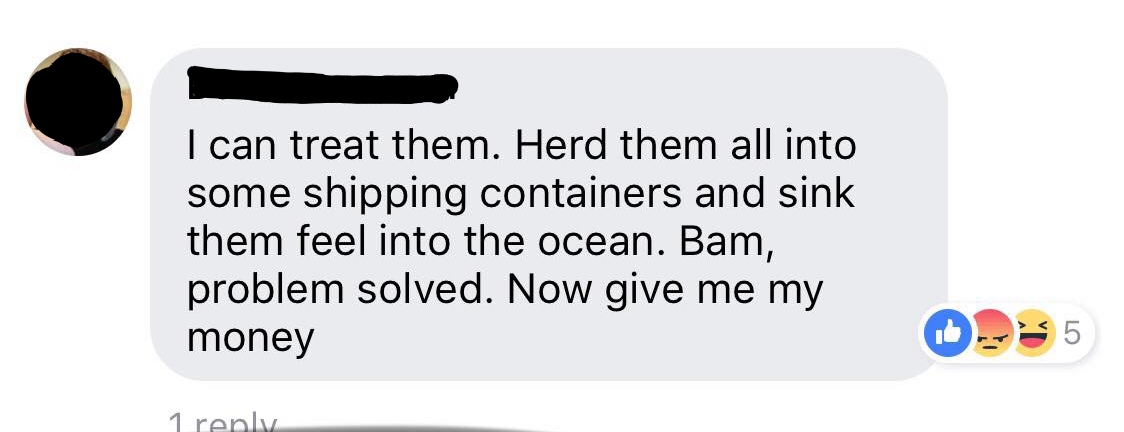

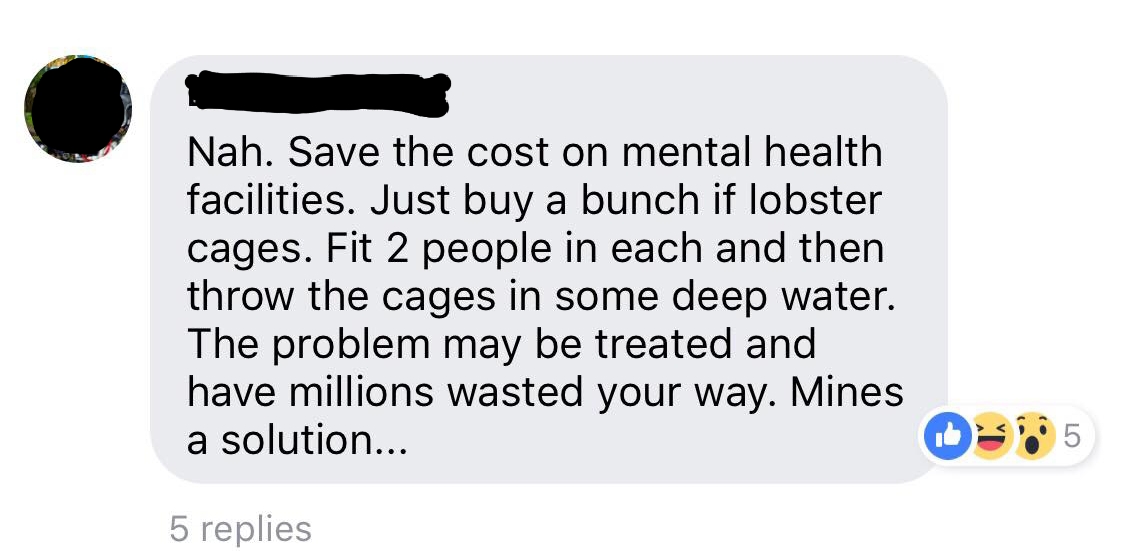

In January, this article circulated among Washington State locals. The headline was “Lawmakers want to put $500 million for mental health facilities up for a vote.” Among a hearty debate in the comments on Facebook, two statements in particular stood out:

Both users suggest a specific (and oddly similar) strategy involving the mass murder of people with mental illness. Each of these users was reported for these specific comments, and Facebook replied to each report the same way: “We reviewed the comment you reported and found it doesn’t violate our Community Standards.” These are, presumably, the same community standards that explicitly say:

“Facebook removes hate speech, which includes content that directly attacks people based on their:

- Race,

- Ethnicity,

- National origin,

- Religious affiliation,

- Sexual orientation,

- Sex, gender, or gender identity, or

- Serious disabilities or diseases.”

Check that last one. Serious disabilities or diseases, which include mental illness. If comments like these, which specifically outline methods of violence against a group with a disability, aren’t considered a direct attack, then what is?

Some might write this off as a tasteless joke, but far too many deadly threats have been veiled under the guise of “It was just a joke! Lighten up!” Try imagining these ‘jokes’ as referencing you, and see whether they seem threatening or not. Whether or not the statements were intended to be funny, they still clearly fall under Facebook’s own definition of hate speech.

This is just one example, but there are many, many more. Social media platforms claim to prohibit hate speech, and yet when it comes right down to doing anything meaningful, like removing users who practice it, they balk. Why?

Remember, this isn’t a First Amendment issue. As it stands now, hate speech is very clearly protected under the First Amendment. But, again, private companies have the ability to impose their own guidelines, and these social media platforms claim to prohibit hate speech. Personally, I don’t want to be a part of a business or platform that allows hate speech. Let those screams go fester in their own online forum somewhere else. When are Facebook, Twitter, and YouTube going to actually start cracking down? Or were those claims only for our EU neighbors? What exactly is the disconnect here?

I’m not sure we’ll ever get an answer on that.

Have you ever experienced hate speech online? Did you report it? How did the company respond?